Background

As AI gets embedded into more decisions that affect people's lives, most products assume users will trust it. But trust isn't a given. It's built, withheld, and highly dependent on context.

This study set out to understand how people actually form trust in AI versus human agents, and what that means for the products we design.

The Problem

Why this research matters

AI-powered experiences are being deployed across healthcare, finance, social services, and civic systems, often in sensitive, high-stakes contexts. Many of these products are designed around capability, not perception.

But if users don't trust an AI system in the context where it's used, its capability is irrelevant. The product fails before the feature ever runs.

Without clarity on how trust is formed or withheld across different situations, designers and product teams are building on assumptions rather than evidence.

Research Question and Hypothesis

Research Question: Is there a meaningful difference in how people trust AI compared to humans, and does that difference shift depending on the type of task?

Hypothesis: Participants would report lower trust in AI than in humans overall, with the gap widening for tasks involving judgment, emotion, or personal context.

Study Design

How the research was structured

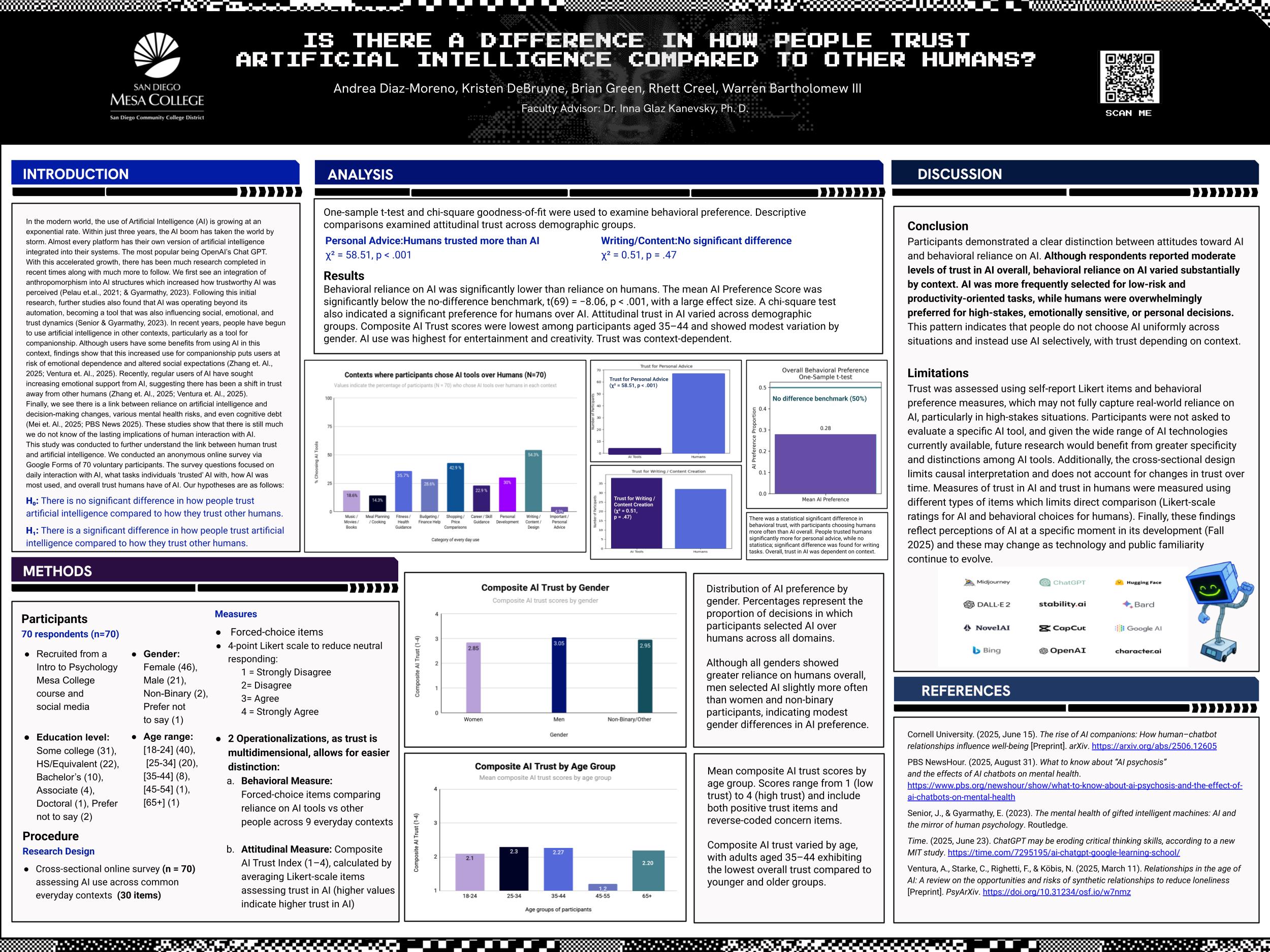

This was a quantitative survey study comparing trust perceptions toward AI and human agents across multiple real-world scenarios.

Participants represented a range of ages and backgrounds, but the analysis compared trust across task contexts rather than segmenting by demographics. Survey responses used Likert-scale trust measures and were compared across task categories.

The scenarios were designed to span a spectrum from objective and data-driven to subjective and emotionally sensitive, because that range was where the hypothesis predicted the most meaningful variation.
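For readers who want to see what "compared across task categories" can look like in practice, here is a minimal Python sketch: it averages Likert trust ratings per agent within each task category, then takes the human-minus-AI difference. This is an illustration under stated assumptions, not the study's actual analysis. The 1 to 5 scale, the category labels, the function names `mean_trust_by_category` and `trust_gap`, and the toy responses are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def mean_trust_by_category(records):
    """Average Likert trust scores per task category and per agent.

    `records` is an iterable of (task_category, agent, score) tuples, where
    agent is "ai" or "human" and score is an integer on an ASSUMED 1-5 scale.
    Returns {category: {agent: mean_score}}.
    """
    buckets = defaultdict(lambda: defaultdict(list))
    for category, agent, score in records:
        if not 1 <= score <= 5:
            raise ValueError(f"Likert score out of range 1-5: {score}")
        buckets[category][agent].append(score)
    return {
        category: {agent: mean(scores) for agent, scores in agents.items()}
        for category, agents in buckets.items()
    }


def trust_gap(means):
    """Human-minus-AI mean trust per category (positive = humans trusted more).

    Categories missing either agent are skipped rather than guessed.
    """
    return {
        category: agent_means["human"] - agent_means["ai"]
        for category, agent_means in means.items()
        if "human" in agent_means and "ai" in agent_means
    }


if __name__ == "__main__":
    # Toy, made-up responses for illustration only; NOT the study's data.
    toy_responses = [
        ("objective", "ai", 4), ("objective", "ai", 4),
        ("objective", "human", 4), ("objective", "human", 5),
        ("emotional", "ai", 2), ("emotional", "ai", 3),
        ("emotional", "human", 4), ("emotional", "human", 5),
    ]
    means = mean_trust_by_category(toy_responses)
    print(means)
    print(trust_gap(means))
```

Under the hypothesis, the gap should be positive and should grow as categories move from objective toward emotionally sensitive tasks. A fuller analysis would also need a significance test and attention to per-category sample sizes, which this sketch deliberately leaves out.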

What the Data Showed

3 findings with direct design implications

1. Trust in AI is context-dependent. Participants expressed meaningfully higher trust in AI for objective, data-driven tasks, and that trust dropped significantly when tasks involved nuance, subjectivity, or emotional stakes. AI isn't universally distrusted; it's situationally distrusted.

2. Humans are trusted more in ambiguous situations. When a task required empathy, judgment, or interpretation, participants consistently favored human agents. The more personal a situation felt, the more a human presence mattered.

3. Transparency shifts willingness to trust. Participants reported greater openness to AI when its limitations and oversight structures were clearly communicated. Transparency didn't eliminate skepticism, but it meaningfully reduced resistance.

What This Means for Design

Translating findings into practice

These findings aren't abstract. They point to concrete design decisions that teams building AI-powered products should be making:

Don't position AI as an authority in sensitive contexts. Users will resist it, and that resistance is well-founded.

Communicate scope and limitations clearly. Transparency is a trust-building feature, not a disclaimer.

Build in human oversight where trust is fragile. This matters most in civic, health, and social service contexts, where the stakes of a wrong decision are high.

Align AI capabilities with user expectations. A system that does more than users expect it to can feel just as untrustworthy as one that does less.

The central design principle this research points to: contextual humility matters more than capability maximization.
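One way a product team might encode several of these recommendations at once (no AI authority in sensitive contexts, an explicit scope note, human oversight where trust is fragile) is a simple presentation rule keyed on task context. The Python sketch below is a hypothetical illustration and was not part of the study: the category names, the `SCOPE_NOTE` wording, and the `present` function are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Handling(Enum):
    AI_WITH_SCOPE_NOTE = "ai_with_scope_note"  # AI answer shown, limits disclosed
    HUMAN_REVIEW = "human_review"              # AI output is a draft; a person decides


# Hypothetical taxonomy: contexts where trust is fragile and stakes are high.
SENSITIVE_CATEGORIES = frozenset({"health", "benefits_eligibility", "personal_support"})

# Hypothetical scope note; real wording should be written and tested with users.
SCOPE_NOTE = (
    "This answer was generated automatically. It may be wrong and "
    "does not know circumstances you haven't shared."
)


@dataclass(frozen=True)
class AIResult:
    category: str
    answer: str


@dataclass(frozen=True)
class Presentation:
    handling: Handling
    message: str
    disclosure: str


def present(result: AIResult) -> Presentation:
    """Choose how to surface an AI output based on the task's context."""
    if result.category in SENSITIVE_CATEGORIES:
        # Don't position AI as the authority: frame it as input to a human decision.
        return Presentation(
            Handling.HUMAN_REVIEW,
            f"Suggested by the assistant, pending review by a staff member: {result.answer}",
            SCOPE_NOTE,
        )
    # Objective, lower-stakes tasks: show the answer directly, still with its limits.
    return Presentation(Handling.AI_WITH_SCOPE_NOTE, result.answer, SCOPE_NOTE)


if __name__ == "__main__":
    print(present(AIResult("unit_conversion", "5 km is about 3.1 miles.")).handling)
    print(present(AIResult("health", "Consider scheduling a follow-up.")).handling)
```

The rule branches on the task's context rather than on what the model can do, which is one way to put "contextual humility over capability maximization" into practice. The scope note is attached in both branches because transparency helped in every context the study examined, not only the sensitive ones.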

Reflection

Why this research transfers beyond the classroom

This was an academic study, and I want to be clear about what that means: the findings are directional, not definitive. A larger, more diverse sample and longitudinal follow-up would be needed to draw stronger conclusions.

That said, the core insight is consistent with the broader literature on human-AI interaction: trust is contextual, transparency matters, and designing for user perception is not the same as designing for technical performance.

For me, this study sharpened something I carry into every project involving AI or data-driven systems. The question is never just "does this work?" It's "will the people it's meant to serve actually trust it enough to use it?" Those are different design problems, and conflating them is where a lot of AI products go wrong.

Why this research transfers beyond the classroom

This was an academic study, and I want to be clear about what that means: the findings are directional, not definitive. A larger, more diverse sample and longitudinal follow-up would be needed to draw stronger conclusions.

That said, the core insight holds up against broader literature on human-AI interaction: trust is contextual, transparency matters, and designing for user perception is not the same as designing for technical performance.

For me, this study sharpened something I carry into every project involving AI or data-driven systems. The question is never just "does this work?" It's "will the people it's meant to serve actually trust it enough to use it?" Those are different design problems, and conflating them is where a lot of AI products go wrong.

Reflection

Why this research transfers beyond the classroom

This was an academic study, and I want to be clear about what that means: the findings are directional, not definitive. A larger, more diverse sample and longitudinal follow-up would be needed to draw stronger conclusions.

That said, the core insight holds up against broader literature on human-AI interaction: trust is contextual, transparency matters, and designing for user perception is not the same as designing for technical performance.

For me, this study sharpened something I carry into every project involving AI or data-driven systems. The question is never just "does this work?" It's "will the people it's meant to serve actually trust it enough to use it?" Those are different design problems, and conflating them is where a lot of AI products go wrong.

Background

As AI gets embedded into more decisions that affect people's lives, most products assume users will trust it. But trust isn't a given. It's built, withheld, and highly dependent on context.

This study set out to understand how people actually form trust in AI versus human agents, and what that means for the products we design.

Why this research matters

AI-powered experiences are being deployed across healthcare, finance, social services, and civic systems, often in sensitive, high-stakes contexts. Many of these products are designed around capability, not perception.

But if users don't trust an AI system in the context it's being used, capability is irrelevant. The product fails before the feature ever runs.

Without clarity on how trust is formed or withheld across different situations, designers and product teams are building on assumptions rather than evidence.

As AI gets embedded into more decisions that affect people's lives, most products assume users will trust it. But trust isn't a given. It's built, withheld, and highly dependent on context.

This study set out to understand how people actually form trust in AI versus human agents, and what that means for the products we design.

Research Question and Hypothesis

Research Question Is there a meaningful difference in how people trust AI compared to humans, and does that difference shift depending on the type of task?

Hypothesis Participants would report lower trust in AI than in humans overall, with the gap widening for tasks involving judgment, emotion, or personal context.

Study Design

How the research was structured

This was a quantitative survey study comparing trust perceptions toward AI and human agents across multiple real-world scenarios.

Participants represented a range of ages and backgrounds, allowing for comparison across trust contexts rather than demographic segmentation. Survey responses used Likert-scale trust measures and were analyzed comparatively across task categories.

The scenarios were designed to span a spectrum from objective and data-driven to subjective and emotionally sensitive, because that range was where the hypothesis predicted the most meaningful variation.

The Problem

What the Data Showed

3 findings with direct design implications

1. Trust in AI is context-dependent. Participants expressed meaningfully higher trust in AI for objective, data-driven tasks. That trust dropped significantly when tasks involved nuance, subjectivity, or emotional stakes. AI isn't universally distrusted — it's situationally distrusted.

2. Humans are trusted more in ambiguous situations. When a task required empathy, judgment, or interpretation, participants consistently favored human agents. The more a situation felt personal, the more a human presence mattered.

3. Transparency shifts willingness to trust. Participants reported greater openness to AI when its limitations and oversight structures were clearly communicated. Transparency didn't eliminate skepticism, but it meaningfully reduced resistance.

What This Means for Design

Translating findings into practice

These findings aren't abstract. They point to concrete design decisions that teams building AI-powered products should be making:

Don't position AI as an authority in sensitive contexts. Users will resist it, and that resistance is well-founded.

Communicate scope and limitations clearly. Transparency is a trust-building feature, not a disclaimer.

Build in human oversight where trust is fragile. Especially in civic, health, and social service contexts where the stakes of a wrong decision are high.

Align AI capabilities with user expectations. A system that does more than users expect it to can feel just as untrustworthy as one that does less.

The central design principle this research points to: contextual humility matters more than capability maximization.

Reflection

Why this research transfers beyond the classroom

This was an academic study, and I want to be clear about what that means: the findings are directional, not definitive. A larger, more diverse sample and longitudinal follow-up would be needed to draw stronger conclusions.

That said, the core insight holds up against broader literature on human-AI interaction: trust is contextual, transparency matters, and designing for user perception is not the same as designing for technical performance.

For me, this study sharpened something I carry into every project involving AI or data-driven systems. The question is never just "does this work?" It's "will the people it's meant to serve actually trust it enough to use it?" Those are different design problems, and conflating them is where a lot of AI products go wrong.

Why this research matters

AI-powered experiences are being deployed across healthcare, finance, social services, and civic systems, often in sensitive, high-stakes contexts. Many of these products are designed around capability, not perception.

But if users don't trust an AI system in the context it's being used, capability is irrelevant. The product fails before the feature ever runs.

Without clarity on how trust is formed or withheld across different situations, designers and product teams are building on assumptions rather than evidence.

Background

As AI gets embedded into more decisions that affect people's lives, most products assume users will trust it. But trust isn't a given. It's built, withheld, and highly dependent on context.

This study set out to understand how people actually form trust in AI versus human agents, and what that means for the products we design.

Research Question and Hypothesis

The Problem

Why this research matters

AI-powered experiences are being deployed across healthcare, finance, social services, and civic systems, often in sensitive, high-stakes contexts. Many of these products are designed around capability, not perception.

But if users don't trust an AI system in the context it's being used, capability is irrelevant. The product fails before the feature ever runs.

Without clarity on how trust is formed or withheld across different situations, designers and product teams are building on assumptions rather than evidence.

Research Question Is there a meaningful difference in how people trust AI compared to humans, and does that difference shift depending on the type of task?

Hypothesis Participants would report lower trust in AI than in humans overall, with the gap widening for tasks involving judgment, emotion, or personal context.

Study Design

What the Data Showed

How the research was structured

This was a quantitative survey study comparing trust perceptions toward AI and human agents across multiple real-world scenarios.

Participants represented a range of ages and backgrounds, allowing for comparison across trust contexts rather than demographic segmentation. Survey responses used Likert-scale trust measures and were analyzed comparatively across task categories.

The scenarios were designed to span a spectrum from objective and data-driven to subjective and emotionally sensitive, because that range was where the hypothesis predicted the most meaningful variation.

Research Question and Hypothesis

Research Question Is there a meaningful difference in how people trust AI compared to humans, and does that difference shift depending on the type of task?

Hypothesis Participants would report lower trust in AI than in humans overall, with the gap widening for tasks involving judgment, emotion, or personal context.

Study Design

How the research was structured

This was a quantitative survey study comparing trust perceptions toward AI and human agents across multiple real-world scenarios.

Participants represented a range of ages and backgrounds, allowing for comparison across trust contexts rather than demographic segmentation. Survey responses used Likert-scale trust measures and were analyzed comparatively across task categories.

The scenarios were designed to span a spectrum from objective and data-driven to subjective and emotionally sensitive, because that range was where the hypothesis predicted the most meaningful variation.

3 findings with direct design implications

1. Trust in AI is context-dependent. Participants expressed meaningfully higher trust in AI for objective, data-driven tasks. That trust dropped significantly when tasks involved nuance, subjectivity, or emotional stakes. AI isn't universally distrusted — it's situationally distrusted.

2. Humans are trusted more in ambiguous situations. When a task required empathy, judgment, or interpretation, participants consistently favored human agents. The more a situation felt personal, the more a human presence mattered.

3. Transparency shifts willingness to trust. Participants reported greater openness to AI when its limitations and oversight structures were clearly communicated. Transparency didn't eliminate skepticism, but it meaningfully reduced resistance.

What This Means for Design

What the Data Showed

Translating findings into practice

These findings aren't abstract. They point to concrete design decisions that teams building AI-powered products should be making:

Don't position AI as an authority in sensitive contexts. Users will resist it, and that resistance is well-founded.

Communicate scope and limitations clearly. Transparency is a trust-building feature, not a disclaimer.

Build in human oversight where trust is fragile. Especially in civic, health, and social service contexts where the stakes of a wrong decision are high.

Align AI capabilities with user expectations. A system that does more than users expect it to can feel just as untrustworthy as one that does less.

The central design principle this research points to: contextual humility matters more than capability maximization.

What the Data Showed

3 findings with direct design implications

1. Trust in AI is context-dependent. Participants expressed meaningfully higher trust in AI for objective, data-driven tasks. That trust dropped significantly when tasks involved nuance, subjectivity, or emotional stakes. AI isn't universally distrusted — it's situationally distrusted.

2. Humans are trusted more in ambiguous situations. When a task required empathy, judgment, or interpretation, participants consistently favored human agents. The more a situation felt personal, the more a human presence mattered.

3. Transparency shifts willingness to trust. Participants reported greater openness to AI when its limitations and oversight structures were clearly communicated. Transparency didn't eliminate skepticism, but it meaningfully reduced resistance.

What This Means for Design

Translating findings into practice

These findings aren't abstract. They point to concrete design decisions that teams building AI-powered products should be making:

Don't position AI as an authority in sensitive contexts. Users will resist it, and that resistance is well-founded.

Communicate scope and limitations clearly. Transparency is a trust-building feature, not a disclaimer.

Build in human oversight where trust is fragile. Especially in civic, health, and social service contexts where the stakes of a wrong decision are high.

Align AI capabilities with user expectations. A system that does more than users expect it to can feel just as untrustworthy as one that does less.

The central design principle this research points to: contextual humility matters more than capability maximization.

What This Means for Design

3 findings with direct design implications

1. Trust in AI is context-dependent. Participants expressed meaningfully higher trust in AI for objective, data-driven tasks. That trust dropped significantly when tasks involved nuance, subjectivity, or emotional stakes. AI isn't universally distrusted — it's situationally distrusted.

2. Humans are trusted more in ambiguous situations. When a task required empathy, judgment, or interpretation, participants consistently favored human agents. The more a situation felt personal, the more a human presence mattered.

3. Transparency shifts willingness to trust. Participants reported greater openness to AI when its limitations and oversight structures were clearly communicated. Transparency didn't eliminate skepticism, but it meaningfully reduced resistance.

How the research was structured

This was a quantitative survey study comparing trust perceptions toward AI and human agents across multiple real-world scenarios.

Participants represented a range of ages and backgrounds, allowing for comparison across trust contexts rather than demographic segmentation. Survey responses used Likert-scale trust measures and were analyzed comparatively across task categories.

The scenarios were designed to span a spectrum from objective and data-driven to subjective and emotionally sensitive, because that range was where the hypothesis predicted the most meaningful variation.

Reflection

Reflection

Why this research transfers beyond the classroom

This was an academic study, and I want to be clear about what that means: the findings are directional, not definitive. A larger, more diverse sample and longitudinal follow-up would be needed to draw stronger conclusions.

That said, the core insight holds up against broader literature on human-AI interaction: trust is contextual, transparency matters, and designing for user perception is not the same as designing for technical performance.

For me, this study sharpened something I carry into every project involving AI or data-driven systems. The question is never just "does this work?" It's "will the people it's meant to serve actually trust it enough to use it?" Those are different design problems, and conflating them is where a lot of AI products go wrong.

Why this research transfers beyond the classroom

This was an academic study, and I want to be clear about what that means: the findings are directional, not definitive. A larger, more diverse sample and longitudinal follow-up would be needed to draw stronger conclusions.

That said, the core insight holds up against broader literature on human-AI interaction: trust is contextual, transparency matters, and designing for user perception is not the same as designing for technical performance.

For me, this study sharpened something I carry into every project involving AI or data-driven systems. The question is never just "does this work?" It's "will the people it's meant to serve actually trust it enough to use it?" Those are different design problems, and conflating them is where a lot of AI products go wrong.

Reflection

Why this research transfers beyond the classroom

This was an academic study, and I want to be clear about what that means: the findings are directional, not definitive. A larger, more diverse sample and longitudinal follow-up would be needed to draw stronger conclusions.

That said, the core insight holds up against broader literature on human-AI interaction: trust is contextual, transparency matters, and designing for user perception is not the same as designing for technical performance.

For me, this study sharpened something I carry into every project involving AI or data-driven systems. The question is never just "does this work?" It's "will the people it's meant to serve actually trust it enough to use it?" Those are different design problems, and conflating them is where a lot of AI products go wrong.

Background

As AI gets embedded into more decisions that affect people's lives, most products assume users will trust it. But trust isn't a given. It's built, withheld, and highly dependent on context.

This study set out to understand how people actually form trust in AI versus human agents, and what that means for the products we design.

Why this research matters

AI-powered experiences are being deployed across healthcare, finance, social services, and civic systems, often in sensitive, high-stakes contexts. Many of these products are designed around capability, not perception.

But if users don't trust an AI system in the context it's being used, capability is irrelevant. The product fails before the feature ever runs.

Without clarity on how trust is formed or withheld across different situations, designers and product teams are building on assumptions rather than evidence.

As AI gets embedded into more decisions that affect people's lives, most products assume users will trust it. But trust isn't a given. It's built, withheld, and highly dependent on context.

This study set out to understand how people actually form trust in AI versus human agents, and what that means for the products we design.

Research Question and Hypothesis

Research Question Is there a meaningful difference in how people trust AI compared to humans, and does that difference shift depending on the type of task?

Hypothesis Participants would report lower trust in AI than in humans overall, with the gap widening for tasks involving judgment, emotion, or personal context.

Study Design

How the research was structured

This was a quantitative survey study comparing trust perceptions toward AI and human agents across multiple real-world scenarios.

Participants represented a range of ages and backgrounds, allowing for comparison across trust contexts rather than demographic segmentation. Survey responses used Likert-scale trust measures and were analyzed comparatively across task categories.

The scenarios were designed to span a spectrum from objective and data-driven to subjective and emotionally sensitive, because that range was where the hypothesis predicted the most meaningful variation.

The Problem

What the Data Showed

3 findings with direct design implications

1. Trust in AI is context-dependent. Participants expressed meaningfully higher trust in AI for objective, data-driven tasks. That trust dropped significantly when tasks involved nuance, subjectivity, or emotional stakes. AI isn't universally distrusted — it's situationally distrusted.

2. Humans are trusted more in ambiguous situations. When a task required empathy, judgment, or interpretation, participants consistently favored human agents. The more a situation felt personal, the more a human presence mattered.

3. Transparency shifts willingness to trust. Participants reported greater openness to AI when its limitations and oversight structures were clearly communicated. Transparency didn't eliminate skepticism, but it meaningfully reduced resistance.

What This Means for Design

Translating findings into practice

These findings aren't abstract. They point to concrete design decisions that teams building AI-powered products should be making:

Don't position AI as an authority in sensitive contexts. Users will resist it, and that resistance is well-founded.

Communicate scope and limitations clearly. Transparency is a trust-building feature, not a disclaimer.

Build in human oversight where trust is fragile. Especially in civic, health, and social service contexts where the stakes of a wrong decision are high.

Align AI capabilities with user expectations. A system that does more than users expect it to can feel just as untrustworthy as one that does less.

The central design principle this research points to: contextual humility matters more than capability maximization.

Reflection

Why this research transfers beyond the classroom

This was an academic study, and I want to be clear about what that means: the findings are directional, not definitive. A larger, more diverse sample and longitudinal follow-up would be needed to draw stronger conclusions.

That said, the core insight holds up against broader literature on human-AI interaction: trust is contextual, transparency matters, and designing for user perception is not the same as designing for technical performance.

For me, this study sharpened something I carry into every project involving AI or data-driven systems. The question is never just "does this work?" It's "will the people it's meant to serve actually trust it enough to use it?" Those are different design problems, and conflating them is where a lot of AI products go wrong.